Outlier detection is a crucial step in data analysis, especially when dealing with multivariate datasets. Outliers can distort statistical analyses, mislead machine learning models, and obscure meaningful insights. Detecting these anomalies is essential for ensuring data quality and enhancing model performance. In this blog, we will explore two powerful methods for multivariate outlier detection: Elliptic Envelope and Isolation Forests. Whether you’re a seasoned data scientist or just starting your journey through a data analyst course in Pune, understanding these techniques will add significant value to your skillset.

What Are Multivariate Outliers?

Before diving into the techniques, let’s clarify what multivariate outliers are. Unlike univariate outliers, which are extreme values in a single feature, multivariate outliers involve unusual combinations of values across multiple features. These outliers may not appear extreme when viewed in isolation, but they become apparent when considering the relationships between variables.

For example, in a dataset containing height and weight, a person who is fairly tall but fairly light may be an outlier when the two features are considered together, even though each value alone falls within its normal range.

Detecting such outliers requires methods that account for the correlation structure among variables.
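
To make the height and weight idea concrete, here is a minimal sketch on synthetic data. The numbers (means, spreads, and the candidate point) are assumptions chosen for illustration, not real measurements. It compares per-feature z-scores, which ignore correlation, with the Mahalanobis distance, which does not.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical height (cm) and weight (kg) data with a strong positive correlation.
n = 500
height = rng.normal(170.0, 8.0, size=n)
weight = 0.9 * (height - 170.0) + 70.0 + rng.normal(0.0, 3.0, size=n)
X = np.column_stack([height, weight])

# Candidate point: fairly tall but fairly light.
point = np.array([182.0, 60.0])

mean = X.mean(axis=0)
std = X.std(axis=0)
z_scores = (point - mean) / std  # per-feature view, ignores correlation

cov = np.cov(X, rowvar=False)
diff = point - mean
mahalanobis = float(np.sqrt(diff @ np.linalg.inv(cov) @ diff))  # joint view

print("z-scores:", np.round(z_scores, 2))
print("Mahalanobis distance:", round(mahalanobis, 2))
```

Both z-scores stay below 2 in absolute value, so neither feature looks unusual on its own, while the Mahalanobis distance is several times larger because the combination contradicts the height and weight relationship in the rest of the data.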

Why Is Multivariate Outlier Detection Important?

- Improved Model Accuracy: Outliers can skew models, especially those sensitive to extreme values, such as regression or clustering.

- Data Quality Assurance: Outliers often indicate errors in data collection, entry, or transmission.

- Insight Discovery: Some outliers represent rare but significant phenomena worth investigating.

- Robust Statistical Analysis: Many statistical tests assume normally distributed data without outliers.

With these motivations in mind, let’s explore two effective techniques.

Elliptic Envelope: A Classical Approach for Gaussian Data

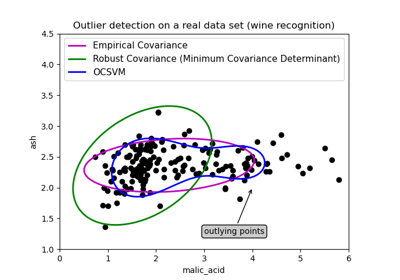

The Elliptic Envelope method assumes that the data follows a multivariate Gaussian distribution. It fits an ellipse (or ellipsoid in higher dimensions) that encompasses the central data points and classifies points outside this envelope as outliers.

How Elliptic Envelope Works

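- Covariance Estimation: It estimates the location (centre) and covariance matrix of the data to capture the spread of the features and the relationships between them. scikit-learn's implementation uses a robust estimator (Minimum Covariance Determinant) so that the fit is less influenced by the outliers themselves.

- Centre and Shape: Using the estimated centre and covariance, it defines an ellipse (or ellipsoid) that captures the majority of data points.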

- Mahalanobis Distance: Each data point’s distance from the centre of the ellipse is calculated using the Mahalanobis distance, which accounts for the covariance structure.

- Thresholding: Points with distances exceeding a threshold (often determined by a contamination parameter specifying the expected proportion of outliers) are flagged as outliers.
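
The four steps above can be sketched by hand with NumPy. This is a simplified illustration, not the library's implementation: it uses the classical mean and covariance rather than a robust estimate, and the data, the planted anomalies, and the `contamination` value are assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)

# Synthetic, roughly Gaussian data plus three planted anomalies (illustrative only).
inliers = rng.multivariate_normal(mean=[0.0, 0.0], cov=[[1.0, 0.8], [0.8, 1.0]], size=200)
planted = np.array([[4.0, -4.0], [-5.0, 5.0], [6.0, 0.0]])
X = np.vstack([inliers, planted])

# Step 1: covariance estimation (classical estimate here, not a robust one).
center = X.mean(axis=0)
cov = np.cov(X, rowvar=False)

# Steps 2 and 3: squared Mahalanobis distance of every point from the centre.
diff = X - center
sq_dist = np.einsum("ij,jk,ik->i", diff, np.linalg.inv(cov), diff)

# Step 4: flag the `contamination` fraction of points with the largest distances.
contamination = 0.02
threshold = np.quantile(sq_dist, 1.0 - contamination)
labels = np.where(sq_dist > threshold, -1, 1)  # -1 = outlier, 1 = inlier

print("flagged indices:", np.flatnonzero(labels == -1))
```

The three planted rows (indices 200 to 202) end up among the flagged points. Because the threshold is a quantile, a fixed share of points is always flagged, whether or not they are genuine anomalies.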

Advantages of Elliptic Envelope

- Works well when the data is approximately Gaussian.

- Considers correlations between variables.

- Provides a clear geometric interpretation.

Limitations

- Sensitive to non-Gaussian data distributions.

- Can break down when outliers make up a large share of the data.

- Assumes a unimodal distribution.

Practical Use in Python

The EllipticEnvelope class from the scikit-learn library implements this method efficiently. Here’s a simple example:

```python
from sklearn.covariance import EllipticEnvelope
import numpy as np

# Sample multivariate data
X = np.array([[1, 2], [2, 3], [3, 4], [10, 10]])

# Fit the model; contamination is the expected proportion of outliers
ee = EllipticEnvelope(contamination=0.1)
ee.fit(X)

# Predict labels: 1 indicates an inlier, -1 an outlier
outliers = ee.predict(X)
print(outliers)
```

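In this example, the last point [10, 10] is likely to be identified as an outlier. Four points are too few for a reliable covariance estimate, so treat the snippet as an illustration of the API rather than a realistic fit.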
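
For a slightly more realistic picture, the sketch below fits the same class on a few hundred synthetic points. The data, the six planted anomalies, and the `contamination` value are assumptions made for illustration. It also shows two other methods of the fitted estimator: `mahalanobis`, which returns squared Mahalanobis distances, and `decision_function`, whose negative values correspond to predicted outliers.

```python
import numpy as np
from sklearn.covariance import EllipticEnvelope

rng = np.random.default_rng(42)

# Synthetic data: 300 correlated Gaussian points plus 6 planted anomalies.
inliers = rng.multivariate_normal([0.0, 0.0], [[1.0, 0.7], [0.7, 1.0]], size=300)
planted = rng.uniform(low=6.0, high=8.0, size=(6, 2)) * np.array([1.0, -1.0])
X = np.vstack([inliers, planted])

ee = EllipticEnvelope(contamination=0.02, random_state=0)
labels = ee.fit_predict(X)         # 1 = inlier, -1 = outlier
sq_dist = ee.mahalanobis(X)        # squared Mahalanobis distances
scores = ee.decision_function(X)   # negative values correspond to outliers

print("number flagged:", int((labels == -1).sum()))
print("all planted points flagged:", bool((labels[-6:] == -1).all()))
```

Inspecting `sq_dist` alongside the labels is a useful habit, since it shows how far beyond the envelope each flagged point actually lies.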

Isolation Forest: A Modern, Non-Parametric Technique

Unlike the Elliptic Envelope, the Isolation Forest algorithm is a non-parametric, tree-based method that makes no assumption about the underlying data distribution. It isolates outliers directly instead of building a profile of normal data points.

How Isolation Forest Works

- Random Partitioning: It builds many isolation trees, each on a random subsample of the data, by repeatedly picking a random feature and a random split value between that feature's minimum and maximum.

- Isolation: Outliers are easier to isolate because they tend to be few and different. Therefore, they require fewer splits to be isolated.

- Path Length: The average path length from the root to a leaf node across trees is used as a measure of normality.

- Scoring: Points with shorter average path lengths are more likely to be outliers.
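
The isolation idea can be illustrated without any library. The sketch below is a deliberately simplified version: it follows a single point through random splits instead of building full trees, it does not subsample, and it skips the score normalisation used by real implementations. The function names `path_length` and `average_path_length` and the data are hypothetical.

```python
import random

def path_length(point, data, rng, depth=0, max_depth=12):
    """Count the random splits needed to isolate `point` within `data`."""
    if len(data) <= 1 or depth >= max_depth:
        return depth
    feature = rng.randrange(len(point))
    values = [row[feature] for row in data]
    lo, hi = min(values), max(values)
    if lo == hi:
        return depth  # no split possible on this feature; stop (simplification)
    split = rng.uniform(lo, hi)
    # Keep only the rows that fall on the same side of the split as `point`.
    if point[feature] < split:
        same_side = [row for row in data if row[feature] < split]
    else:
        same_side = [row for row in data if row[feature] >= split]
    return path_length(point, same_side, rng, depth + 1, max_depth)

def average_path_length(point, data, n_trees=200, seed=0):
    """Average the path length of `point` over many random partitionings."""
    rng = random.Random(seed)
    return sum(path_length(point, data, rng) for _ in range(n_trees)) / n_trees

gen = random.Random(1)
cluster = [(gen.gauss(0, 1), gen.gauss(0, 1)) for _ in range(100)]
anomaly = (8.0, 8.0)
data = cluster + [anomaly]

print("anomaly:", average_path_length(anomaly, data))
print("typical point:", average_path_length(cluster[0], data))
```

The far-away point is separated after only a few splits on average, while a point inside the cluster needs many more, which is the signal that the scoring step relies on.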

Advantages of Isolation Forest

- Does not assume any distribution.

- Scales well to high-dimensional datasets.

- Robust against masking and swamping effects caused by multiple outliers.

- Requires less computation compared to some other outlier detection algorithms.

Limitations

- Randomness may cause some variability in results.

- Requires tuning of parameters like the number of trees (n_estimators) and the subsample size (max_samples).

- Less intuitive interpretation compared to the Elliptic Envelope.

Practical Use in Python

Scikit-learn also provides a straightforward implementation of Isolation Forest:

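```python
from sklearn.ensemble import IsolationForest
import numpy as np

# Sample multivariate data
X = np.array([[1, 2], [2, 3], [3, 4], [10, 10]])

# random_state fixes the random splits so that results are reproducible
iso_forest = IsolationForest(contamination=0.1, random_state=42)
iso_forest.fit(X)

# Predict labels: 1 indicates an inlier, -1 an outlier
outliers = iso_forest.predict(X)
print(outliers)
```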

Points predicted as -1 are outliers; points predicted as 1 are inliers.
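
The sketch below applies the same class to data that is clearly not Gaussian: a noisy ring with two planted anomalies. The ring, the planted points, and the parameter values are assumptions for illustration. It also uses `score_samples`, where lower values mean more anomalous, to rank points instead of only labelling them.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(7)

# Synthetic ring-shaped data (non-elliptical) plus two planted anomalies.
angles = rng.uniform(0.0, 2.0 * np.pi, size=300)
radii = rng.normal(5.0, 0.3, size=300)
ring = np.column_stack([radii * np.cos(angles), radii * np.sin(angles)])
planted = np.array([[12.0, 12.0], [-12.0, -12.0]])
X = np.vstack([ring, planted])

iso_forest = IsolationForest(n_estimators=200, contamination=0.01, random_state=42)
labels = iso_forest.fit_predict(X)     # 1 = inlier, -1 = outlier
scores = iso_forest.score_samples(X)   # lower = more anomalous

most_anomalous = np.argsort(scores)[:2]
print("indices with the lowest scores:", sorted(most_anomalous.tolist()))
print("number flagged:", int((labels == -1).sum()))
```

Ranking by score is often more informative than the hard labels, because the number of flagged points is fixed by `contamination` rather than by how unusual the points are.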

Comparing Elliptic Envelope and Isolation Forest

| Feature | Elliptic Envelope | Isolation Forest |
| --- | --- | --- |
| Assumption | Multivariate Gaussian | No assumption on distribution |
| Sensitivity to Outliers | Sensitive | Robust |
| Interpretation | Geometric (ellipsoid) | Tree-based isolation |
| Scalability | Moderate | High |
| Best for | Data with Gaussian distribution | High-dimensional, complex data |
| Computational Complexity | Moderate | Lower |

When to Use Which?

- Use Elliptic Envelope if you have reason to believe your data is Gaussian and want a more interpretable model.

- Use Isolation Forest for larger, complex datasets where distribution assumptions may not hold.
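
When it is unclear which assumption holds, one option is to run both detectors on the same data and compare what they flag. The sketch below does this on synthetic two-cluster data, which violates the single-Gaussian assumption. The data, the planted anomalies, and the `contamination` value are assumptions, and the amount of agreement will differ from dataset to dataset.

```python
import numpy as np
from sklearn.covariance import EllipticEnvelope
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(5)

# Synthetic bimodal data plus three planted anomalies (illustrative only).
X = np.vstack([
    rng.normal(loc=[-4.0, 0.0], scale=0.5, size=(150, 2)),
    rng.normal(loc=[4.0, 0.0], scale=0.5, size=(150, 2)),
    np.array([[0.0, 9.0], [0.0, -9.0], [12.0, 9.0]]),
])

detectors = {
    "EllipticEnvelope": EllipticEnvelope(contamination=0.02, random_state=0),
    "IsolationForest": IsolationForest(contamination=0.02, random_state=0),
}

# Collect the row indices each detector labels as -1 (outlier).
flagged = {
    name: set(np.flatnonzero(det.fit_predict(X) == -1).tolist())
    for name, det in detectors.items()
}

for name, idx in flagged.items():
    print(name, "flagged", len(idx), "points")
print("flagged by both:", sorted(flagged["EllipticEnvelope"] & flagged["IsolationForest"]))
```

Points flagged by both methods are usually the safest candidates to investigate first; points flagged by only one method are a hint that the method's assumptions deserve a closer look.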

Integration with Your Data Analysis Workflow

Multivariate outlier detection is often part of the exploratory data analysis (EDA) phase. After detecting outliers, you may choose to:

- Remove them to improve model performance.

- Investigate them as potential errors or rare events.

- Use robust models that can handle outliers.
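
The first two options can be sketched with a boolean mask over the predicted labels. The data and parameter values below are assumptions for illustration; `X_clean` and `X_review` are hypothetical names.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(3)
X = np.vstack([
    rng.normal(0.0, 1.0, size=(200, 2)),   # synthetic "normal" observations
    np.array([[9.0, 9.0], [-9.0, 9.0]]),   # two planted anomalies
])

labels = IsolationForest(contamination=0.01, random_state=0).fit_predict(X)
is_outlier = labels == -1

X_clean = X[~is_outlier]    # option 1: drop flagged rows before modelling
X_review = X[is_outlier]    # option 2: set flagged rows aside for inspection

print("kept:", X_clean.shape[0], "set aside:", X_review.shape[0])
```

Keeping the flagged rows in a separate array, rather than discarding them, makes it possible to check later whether they were errors or genuine rare events.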

Both Elliptic Envelope and Isolation Forest can be easily integrated into Python workflows, making them valuable tools for aspiring data professionals.

If you’re looking to build proficiency in these methods and many other data science skills, enrolling in a data analysis course in Pune can provide structured learning and hands-on projects. Many courses cover outlier detection techniques, machine learning algorithms, and practical applications in real-world datasets.

Practical Tips for Effective Outlier Detection

- Understand Your Data: Know the domain and expected distributions before choosing a detection method.

- Visualise Multivariate Data: Use dimensionality reduction techniques like PCA or t-SNE to visualise outliers.

- Tune Parameters Carefully: The contamination parameter influences how many points are flagged as outliers.

- Combine Methods: Sometimes using multiple detection methods provides better insights.

- Interpret Results in Context: Not all outliers are bad; some might be valuable, rare cases.
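
To illustrate the tip about tuning, the sketch below fits the same detector with three contamination values on synthetic data that contains no planted anomalies. The data and the values tried are assumptions for illustration.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(11)
X = rng.normal(0.0, 1.0, size=(400, 3))  # synthetic data with no planted anomalies

flag_counts = {}
for contamination in (0.01, 0.05, 0.10):
    model = IsolationForest(contamination=contamination, random_state=0)
    labels = model.fit_predict(X)
    flag_counts[contamination] = int((labels == -1).sum())

print(flag_counts)
```

The number of flagged points grows roughly in proportion to the contamination value even though nothing was planted, so the parameter should reflect a real expectation about the data rather than a default.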

Conclusion

Detecting multivariate outliers is a fundamental step in ensuring the quality and reliability of your data analyses. Both Elliptic Envelope and Isolation Forest provide robust frameworks to identify anomalies effectively. The choice of method depends mainly on your dataset’s characteristics and the goals of your analysis.

Whether you are pursuing a data analytics course or working on real-world datasets, mastering these techniques will significantly enhance your ability to handle complex data challenges. As data continues to grow in volume and complexity, skills in multivariate outlier detection will remain highly relevant in the evolving data landscape.

By gaining expertise in these methods, you not only improve your data quality but also gain deeper insights and build more accurate predictive models. If you want to deepen your knowledge and practical experience, consider joining a reputable data analyst course that covers advanced topics in depth.

Business Name: ExcelR – Data Science, Data Analyst Course Training

Address: 1st Floor, East Court Phoenix Market City, F-02, Clover Park, Viman Nagar, Pune, Maharashtra 411014

Phone Number: 096997 53213

Email Id: enquiry@excelr.com